RedPajama replicates LLaMA dataset to build open source, state-of

RedPajama, a project to create fully open-source large language models, has released a 1.2-trillion-token dataset that follows the LLaMA recipe.

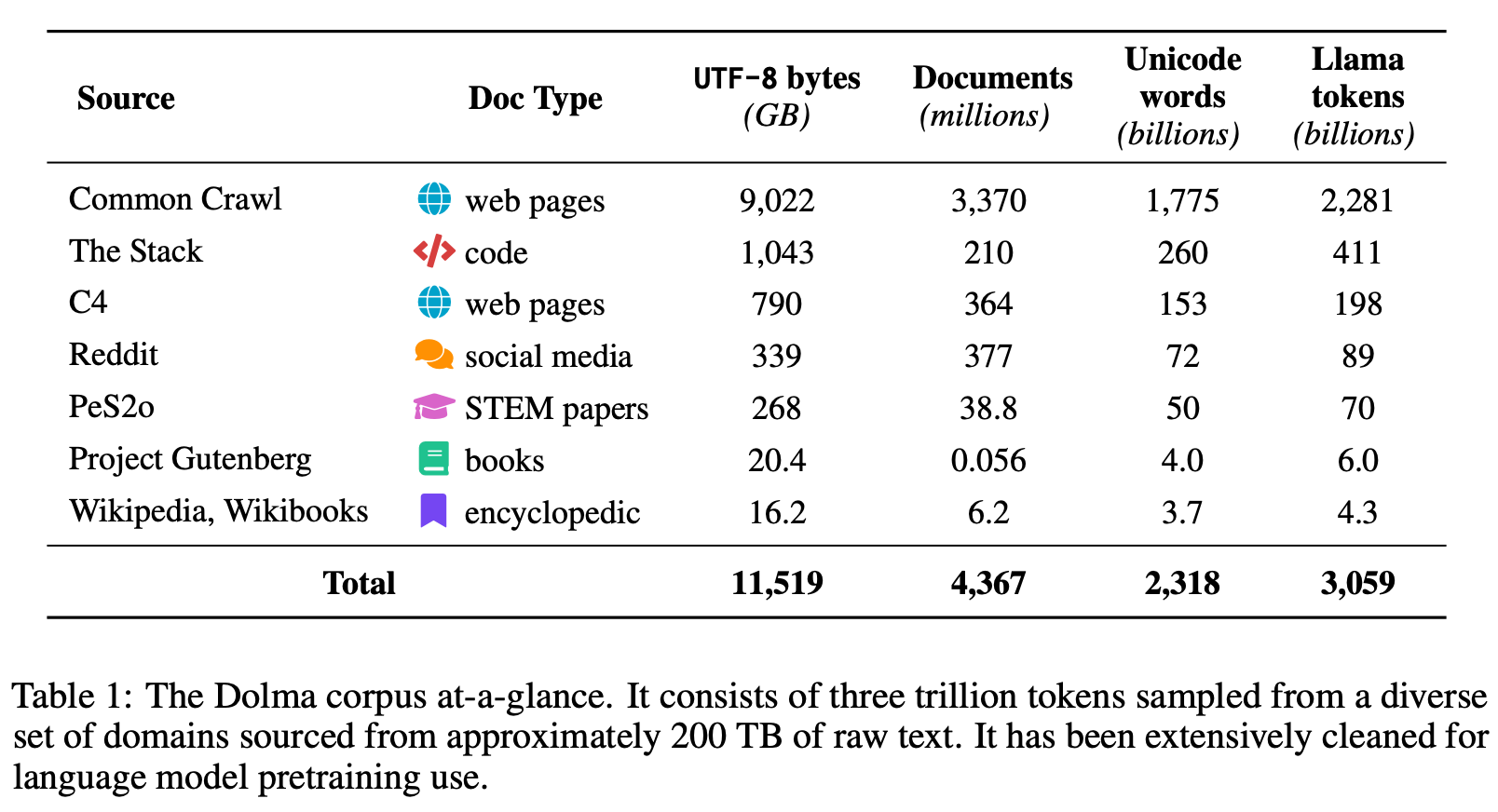

Dolma, OLMo, and the Future of Open-Source LLMs

Vipul Ved Prakash on LinkedIn: RedPajama replicates LLaMA dataset to build open source, state-of-the-art…

LLaMA clone: RedPajama – first open-source decentralized AI with

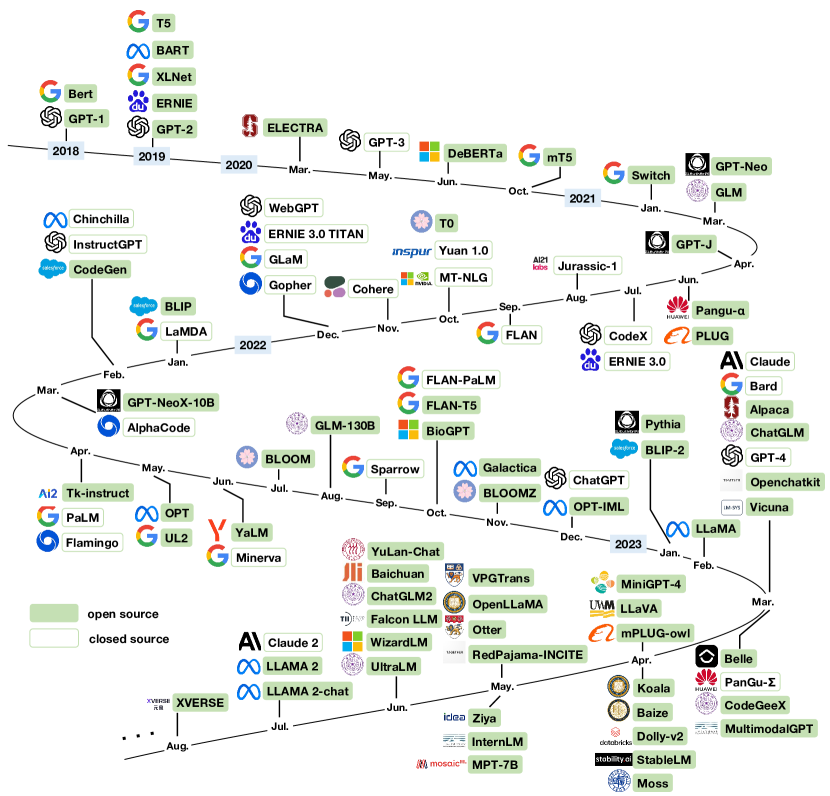

LLaMA Derivatives: The Latest On Meta's Open Source LLM

François Lafond (@FLCompRes) / X

RedPajama-Data: Recreating the LLaMA training dataset, from 爱可可-爱生活 on Weibo

togethercomputer/RedPajama-Data-1T-Sample · Datasets at Hugging Face

🎮 Replica News

2023 in science - Wikipedia

Open-Sourced Training Datasets for Large Language Models (LLMs)

[2308.14149] Examining User-Friendly and Open-Sourced Large GPT

Why LLaMA-2 is such a Big Deal