BERT-Large: Prune Once for DistilBERT Inference Performance - Neural Magic

arxiv-sanity

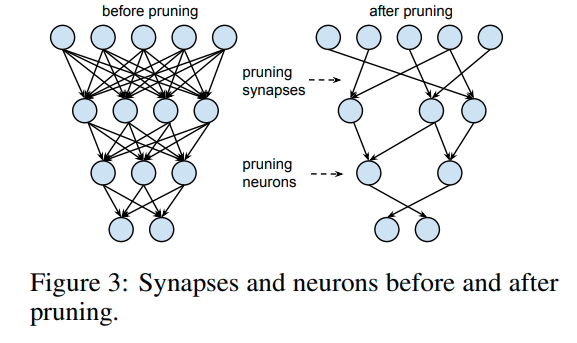

Neural Network Pruning Explained

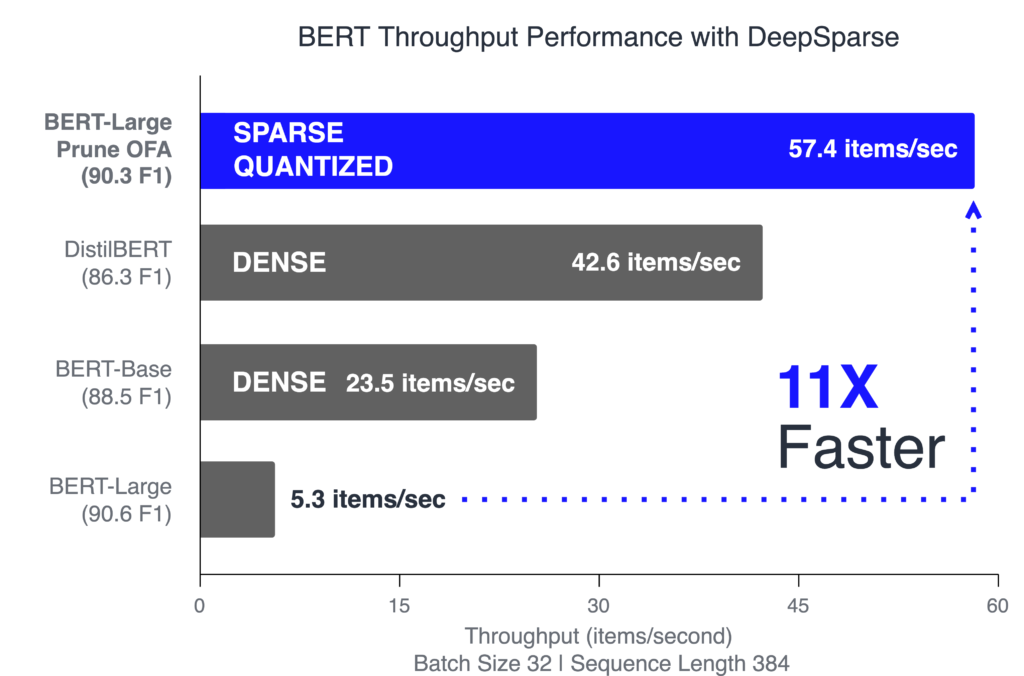

kevin chang on LinkedIn: Release Intel® Extension for Transformers v1.1 Release ·…

Mark Kurtz on LinkedIn: BERT-Large: Prune Once for DistilBERT Inference Performance

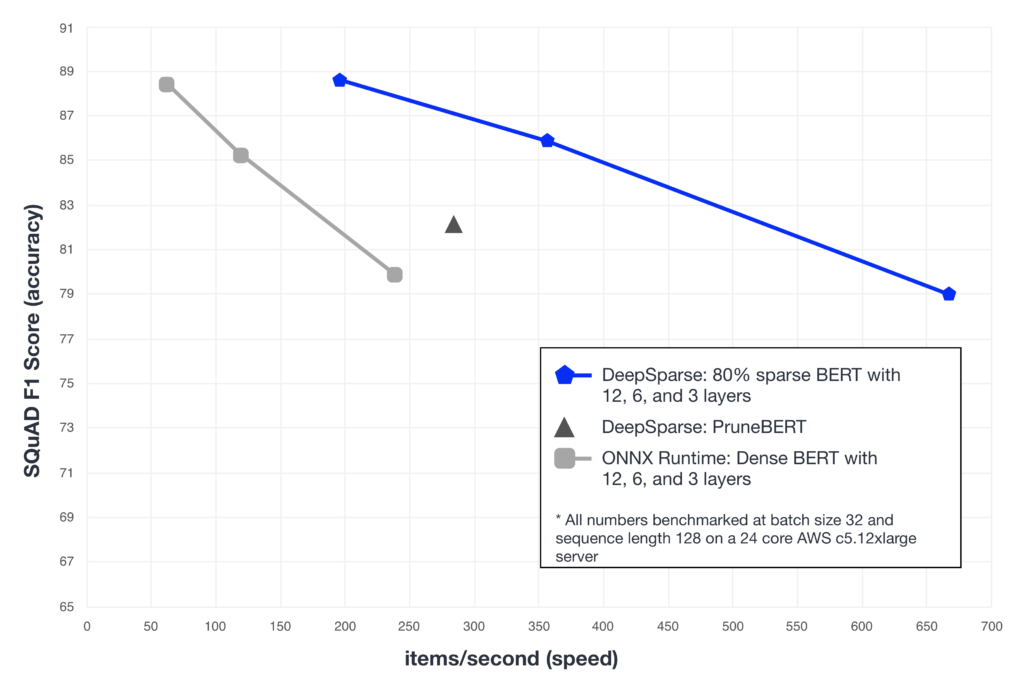

Pruning Hugging Face BERT with Compound Sparsification - Neural Magic

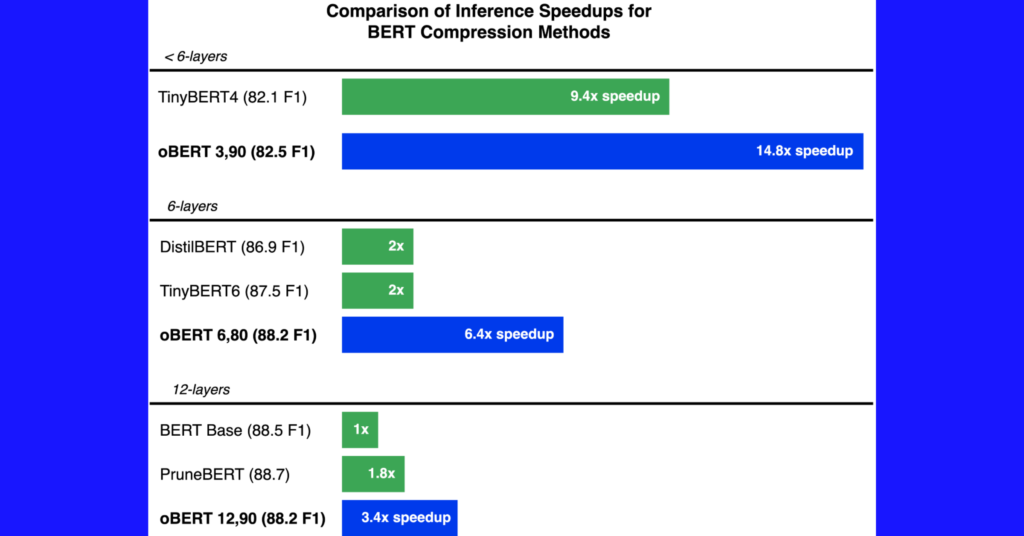

oBERT: GPU-Level Latency on CPUs with 10x Smaller Models

(PDF) The Optimal BERT Surgeon: Scalable and Accurate Second-Order Pruning for Large Language Models
